Utilizing Laser Technology in Dimensional Metrology Applications

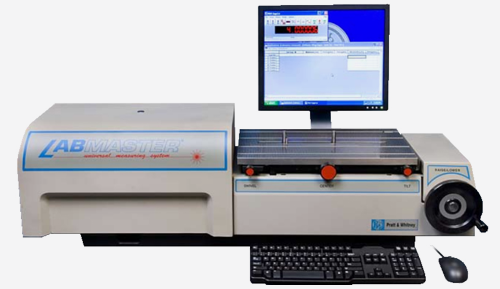

Using LabMaster® laser technology in dimensional measuring applications is fast, accurate, and traceable. This paper will discuss its theory of operation, associated uncertainties, as well as justification to procure such an instrument. Topics include:

- Why LabMaster Instruments use Lasers

- Helium-Neon Laser and its Traceability

- Setting the Scale Factor (Mastering)

- Re-Datum Frequency

- Recommended Master Gage Blocks and Ring Gages

- Uncertainty Analysis

- Time Study / Measurement Cycle Times

- Justification / Return on Investment (ROI)

Why LabMaster Instruments use Lasers

The wavelength of light provides a very high resolution, linear, and stable reference for dimensional measurement. LabMaster instruments effectively couple the wavelength of light to the part to be measured. They do this by using a fringe counting laser interferometer (see Definitions) to detect linear motion of a probe that contacts the part (a mechanical comparison). LabMasters employ patented laser paths that are in-line with the measurement axis to minimize Abbe error. The probes and associated optics are guided along very accurate low friction slides by precision force systems to minimize instrument error. Although direct gage block interferometers (wringing a block to a platen) use the same principles, LabMaster systems offer the advantages of a protected environment for the light path, direct readout, faster operation, lower cost, and the ability to measure many different parts. LabMasters have accuracies equivalent to short-range comparators but offer increased productivity due to their wide direct reading range.

Although there are different methods for using a laser in measurement (such as interferometry, triangulation, or scanning), interferometry is typically orders of magnitude more accurate than other methods. LabMaster instruments use laser interferometers for the following reasons:

Resolution - LabMaster instruments resolve the wavelength of light to 0.1 micro-inch [2.5 nanometers].

Stability - The thermal coefficient of expansion for the laser wavelength is more than ten times smaller than that of steel. Unlike steel, however, the laser wavelength is also slightly affected by changes in air pressure and humidity. The laser wavelength will change 1 part-per-million (1 micro-inch/inch) with either an air temperature change of 2 ºF [1 ºC], an air pressure change of 0.1 inch [2.5 mm] of mercury, or a relative humidity change of 30%.
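The sensitivities quoted above can be combined into a rough estimate of how far the laser wavelength drifts for a given change in room conditions. The following is a minimal sketch, not a LabMaster algorithm: the function names are hypothetical, and summing the magnitudes of the three effects is an assumed worst case (in practice the effects can partly cancel).

```python
# Sensitivities quoted in the text: each of these changes shifts the
# laser wavelength in air by roughly 1 part per million (ppm).
PPM_PER_DEG_F = 1.0 / 2.0      # 1 ppm per 2 °F of air temperature
PPM_PER_IN_HG = 1.0 / 0.1      # 1 ppm per 0.1 inch of mercury
PPM_PER_PCT_RH = 1.0 / 30.0    # 1 ppm per 30 % relative humidity


def wavelength_shift_ppm(d_temp_f=0.0, d_press_in_hg=0.0, d_rh_pct=0.0):
    """Assumed worst case: add the magnitudes of the three effects."""
    return (abs(d_temp_f) * PPM_PER_DEG_F
            + abs(d_press_in_hg) * PPM_PER_IN_HG
            + abs(d_rh_pct) * PPM_PER_PCT_RH)


def length_error_microinch(length_inch, shift_ppm):
    """1 ppm corresponds to 1 micro-inch per inch of measured length."""
    return length_inch * shift_ppm


if __name__ == "__main__":
    # Hypothetical room drift: 1 °F, 0.05 in. Hg, 15 % relative humidity.
    shift = wavelength_shift_ppm(1.0, 0.05, 15.0)
    print(f"wavelength shift: {shift:.2f} ppm")
    print(f"error over 4 inches: {length_error_microinch(4.0, shift):.2f} micro-inch")
```

These figures apply when the scale factor depends directly on the wavelength in air; mastering against two gage blocks absorbs most of the effect at the time of mastering.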

Linearity - The wavelength of light is very linear and does not suffer from linearity errors inherent in LVDTs and glass scales.

Accuracy - LabMaster instruments ensure accuracy and traceability by two different user-selectable methods. A two-point mastering method determines the scale factor and datum by using two traceable gage blocks and takes less than a minute to perform. A second method uses a traceable block to set the datum while the scale factor is determined by the wavelength of light (a physical constant). In the second method the wavelength of light is automatically calculated by using environmental information entered by the operator.

Helium-Neon (He-Ne) Laser and its Traceability

The question concerning the traceability to national standards of interferometers utilizing the 0.633 µm line of the Helium-Neon (He-Ne) laser is one that has been faced before. It is the opinion of national and international standards institutes that such devices are a priori traceable, and that periodic calibration of the He-Ne laser is not required.

Their position is based on the following considerations:

The Comité Consultatif pour la Définition du Mètre, the ultimate authority for the international definition of length, has accepted the 0.633 µm line of the He-Ne laser as a secondary standard of length (Recommendation M1 1973). The physical principles of laser action preclude any He-Ne laser from producing light of a wavelength which differs from the accepted value of 632991.399 x 10^-12 m by more than 1 part in 10^6. Hence, for all technical purposes, a He-Ne laser which produces a beam realizes the international and U.S. standard of length to an accuracy sufficient for the needs.

Furthermore, when modern stabilization techniques are functioning they can reduce uncertainty to about 1 part in 10^9. They cannot malfunction and degrade the performance below the 10^-6 level.

In view of these facts, national and international institutes do not provide routine calibration for laser interferometers. Calibration would not provide further assurance of in-use functioning, and the inevitable mistreatment of transport to and from laboratories would be counterproductive. All such devices are, by nature, considered traceable to national standards in all the usual contexts.

LabMaster instruments use a two-frequency AC He-Ne laser system that involves modern digital electronics, software, and mechanical assembly. The software and electronics failure mode is catastrophic and is easily detected by use of built-in test circuits in modern interferometers.

Therefore, in order to have traceability from the LabMaster, only the master gages (such as blocks and rings) need to be calibrated and certified on a periodic basis. A ‘calibrate before use’ sticker can be placed on the LabMaster in order to satisfy ISO requirements.

Setting the Scale Factor (Mastering)

Accurate measurements can be made only after the LabMaster is mastered. Mastering involves the setting of a scale factor and a datum. Once a scale factor is determined, it is held in memory until overwritten.

The scale factor can be set in one mode and used in another (i.e. set the scale factor using gage blocks and use the same scale factor for measuring rings). The datum must be set for each mode (i.e. an internal master must be used to set the datum before measuring rings in the previous example).

There are two different methods for establishing a scale factor. Each method is independent of the other and will overwrite any previous scale factor. The two methods are the Two-Gage Block and the Environmental Variable Method.

Two Gage Block Method

The two-gage block method is the recommended method of mastering the instrument. It has the advantages of speed and accuracy. This method uses two master gage blocks (e.g. 0.1 inch and a 4.0-inch [25.4 mm & 101.6 mm]) to establish a scale factor. Besides the instrument uncertainty, the total uncertainty is mainly dependent upon the uncertainty of these masters as well as the master used for the datum. The LabMaster scale factor is established through patented technology of counting fringe patterns, somewhat similar to a typical laser system. However, instead of entering environmental data such as temperature, pressure, and humidity, this method utilizes a second master gage block to establish the scale factor over a long measuring range. The result is a fast, accurate, and cost-effective method of providing traceability to known standards.
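The idea behind two-point mastering can be illustrated with an ordinary two-point linear calibration. This is a sketch under stated assumptions, not the patented LabMaster implementation: the raw "counts", the function names, and the example numbers are all hypothetical, and the instrument is assumed to be linear between the two masters.

```python
def two_point_master(count_1, count_2, length_1, length_2):
    """Return (scale, offset) so that length = scale * count + offset.

    count_1, count_2   : raw readings taken on the two master blocks
    length_1, length_2 : certified lengths of those blocks (inch)
    """
    if count_1 == count_2:
        raise ValueError("the two master readings must differ")
    scale = (length_2 - length_1) / (count_2 - count_1)
    offset = length_1 - scale * count_1
    return scale, offset


def to_length(count, scale, offset):
    """Convert a raw reading to a length using the stored scale and datum."""
    return scale * count + offset


if __name__ == "__main__":
    # Hypothetical raw readings for a 1.0-inch and a 4.0-inch master block.
    scale, offset = two_point_master(10_000_000, 40_000_000, 1.0, 4.0)
    print(f"part length: {to_length(25_000_000, scale, offset):.7f} inch")
```

Because the scale is derived from two physical masters measured under the current room conditions, no temperature, pressure, or humidity entries are needed in this scheme.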

Environmental Variable Method

The environmental variable method (one gage block method) uses a single gage block as a datum. Through a software algorithm, the wavelength of the laser is established using the following variables: temperature of the air inside the laser chamber, temperature of the part, ambient temperature, pressure, humidity, and the coefficient of expansion of the part material. The advantages of the environmental variable method are traceability to a known physical constant (the laser beam wavelength) and the elimination of the need for a second gage block as a second data point. The disadvantages are the need to enter seven pieces of information (including the datum), the need for traceability for each piece of information, and the fact that cosine errors are not compensated for (as they are in the two-gage block method). This method of setting the scale factor is typical of many laser systems and is beneficial in long length measurements where a second master (e.g. 20 inches and above) is neither available nor practical.

Re-Datum Frequency (Two Gage Block Method)

Even in the best-controlled laboratories, environmental changes such as temperature, pressure, and humidity are inevitable. Using the two-gage block method, pressure and humidity have a very small effect on the laser scale factor (see Stability - Why LabMaster Instruments use Lasers). In a typical calibration laboratory where the environment is stable within 1 °F [0.56 °C], the scale factor will change approximately 0.1 part per million (0.1 micro-inch/inch). Therefore, if the laboratory environment has changed 1 °F [0.56 °C] since the last time a 4.0-inch scale factor was set, a 0.4 micro-inch error could exist. This error can be minimized, if not eliminated, by re-checking the scale factor at least once an hour, or in tightly controlled rooms, once a day.

The most critical environmental variable to control is room temperature fluctuations (gradients), which can shift the datum or reference point. This is more commonly known as "datum shift". LabMaster instruments minimize this shift by buffering their laser from environmental changes and by incorporating thermally stable composite materials into their design.

To further minimize datum shift, it is important that parts and master gages are on the same soak plate (physically close together) and enough soak time has been allowed for them to acclimate (2-24 hours depending on part size). Also, by applying proper measuring techniques such as sandwiching the readings (master, part1, part2…, master), datum shift will be minimized. For more information on measuring to millionths, please request Pratt & Whitney publication, Maximizing Accuracy in Micro-inch Measurement: © 1993.

The datum for any mode of operation (internal or external) can be reset at any time without affecting the scale factor. For example, a 6.0 inch dial caliper has an etched slide rule that is fixed and linear (consider this the scale factor on the LabMaster). Before accurate measurements are taken, the jaws of the caliper are brought together and the dial pointer is set to zero (consider this the datum). Since the caliper's slide rule is inherently linear (similar to the scale factor), frequent checks and resetting of the dial pointer (datum) can be made without affecting the slide rule (scale factor).

To determine the re-datum frequency due to datum shift, it is recommended that a repeatability test be conducted within the laboratory where the LabMaster is to be used. This test should be conducted for each measurement application (ring, block, plug, etc.) because the time interval between part measurements will vary (i.e. 2 min./ring, 1 min./plug, etc.).

For example, after clamping a 1.0-inch [25.4 mm] gage block in place, take 10 repeat readings at 13-second intervals, which is the approximate cycle time per block (see Time Study / Measurement Cycle Times). To aid in this, the LabMaster software program, GageCal™, can automatically cycle the probes using the 'Automatic Measuring' icon. The readings are then automatically recorded. This test is repeated at random intervals during an 8 hour period (typical working day), all the while making sure that the room conditions are typical of daily operations. After reviewing the data, the worst-case results are used to determine the re-datuming frequency. *Note: In this example, only the environmental component of the data is analyzed (mainly temperature and, to a lesser extent, vibration).

| Reading | Measured length |
| --- | --- |
| 1 | 1.0000000 inch |
| 2 | 1.0000003 inch |
| 3 | 1.0000005 inch |
| 4 | 1.0000001 inch |
| 5 | 1.0000010 inch |
| 6 | 1.0000020 inch |
| 7 | 1.0000035 inch |
| 8 | 1.0000042 inch |
| 9 | 1.0000051 inch |
| 10 | 1.0000066 inch |

In the example above, the reading increased approximately 1 micro-inch [0.025 micron] after the 4th reading. This is most likely due to a temperature change of the block of 0.1 °F [0.056 °C]. After the 5th reading, the block size went up another 1 micro-inch (temperature went up 0.2 °F). At the 10th reading, the block size had gone up a total of 6.6 micro-inches (temperature went up a total of 1.0 °F from the start of the repeatability test). *Rule of thumb: a 1.0-inch steel, square gage block heated 1.0 °F will increase in length by 6.6 micro-inches.

Although you should never see such large changes so quickly, this shows the importance of periodically going back to the first datum point and, if necessary, resetting it. Re-datuming therefore compensates for room environmental changes such as temperature, pressure, and humidity during a given period of time. Also, when the datum is set with a master of the same material/coefficient of expansion (e.g. steel, carbide) and like geometry as the measured part, elastic deformation errors become irrelevant. This further minimizes errors associated with contact point deformation.
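The rule of thumb can be turned around to estimate the temperature rise implied by each reading in the repeatability test. The sketch below is illustrative only: the function name is hypothetical, and it assumes that all of the observed growth is thermal expansion of a steel block at 6.6 micro-inch per inch per °F.

```python
# Rule of thumb from the text: steel grows 6.6 micro-inch per inch per °F.
STEEL_MICROINCH_PER_INCH_PER_DEG_F = 6.6

# The ten repeat readings from the example (inch).
READINGS_INCH = [
    1.0000000, 1.0000003, 1.0000005, 1.0000001, 1.0000010,
    1.0000020, 1.0000035, 1.0000042, 1.0000051, 1.0000066,
]


def implied_temp_rise_f(reading_inch, reference_inch, nominal_inch=1.0):
    """Temperature rise (°F) that would explain the growth since the reference."""
    growth_microinch = (reading_inch - reference_inch) * 1e6
    return growth_microinch / (STEEL_MICROINCH_PER_INCH_PER_DEG_F * nominal_inch)


if __name__ == "__main__":
    reference = READINGS_INCH[0]
    for number, reading in enumerate(READINGS_INCH, start=1):
        rise = implied_temp_rise_f(reading, reference)
        print(f"reading {number:2d}: implied rise {rise:5.2f} °F")
```

A laboratory could compare the implied rise against its allowable drift to choose how many parts to measure between datum checks.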

With this repeatability data, an operator can determine the amount of temperature variance in the laboratory thus determining a time frame for resetting the datum point at predefined intervals (sandwiching the readings). The better the laboratory temperature stability the less often the need to go back and check the datum point. Temperature uncertainty (thermal gradient uncertainty) for the LabMaster is therefore dependent on the collection of this type of data and the ability to compensate for it. Therefore, the temperature uncertainty is mainly dependent on the operator and procedure, not the LabMaster.

Recommended Master Gage Blocks and Ring Gages

Listed below are the grand master gages needed for the LabMaster Universal. These masters represent the traceability for the LabMaster and should be of the highest grade possible (gage block grade 0.5 or 1, or class XX or XXX ring gage), of good quality, and preferably have a calibration history. It is recommended that steel blocks and rings be purchased unless the majority of the measured parts are of a different material. In total, approximately 20 master gages are needed for the LabMaster to have complete traceability to a national laboratory. This compares with maintaining certification of over 200 master gages, which short-range comparators require.

| Gage Blocks | Ring Gages |
| --- | --- |
| 0.05 inch | 0.04 inch |
| **0.1 inch | 0.125 inch |
| **0.1 inch | 0.25 inch |
| **0.2 inch | **0.1 inch |
| 3.0 inch | 2.0 inch |
| 4.0 inch | 3.0 inch |
| 7.0 inch | 4.0 inch |
| *10.0 inch | *7.0 inch |
| *13.0 inch | *10.0 inch |
| * for long length measurements | |
| ** quantity of two | |

To have the lowest total uncertainty possible, it is recommended that the master gages be sent directly to a national laboratory (e.g. NIST). If this is not practical, they should be sent to a commercial laboratory that itself has direct traceability. Sending masters directly to a national laboratory ensures not only the lowest uncertainty but also peace of mind.

If it is decided to send the masters directly to NIST, certification could take as long as 4 to 6 months and costs start at $50/block and $500/ring. For future NIST re-calibrations, call and reserve a time slot before sending in the masters. It would also be beneficial to 'cross pollinate' these masters to a secondary set. This can be done on the LabMaster and could prove helpful when your grand masters are out for re-certification.

If you plan on measuring other gage materials such as carbide or ceramic, it would be beneficial to purchase at least a few master gage blocks and/or ring gages of the same material. Once the LabMaster scale factor has been set, using a master datum of the same coefficient (carbide, ceramic, etc) and geometry (block, ring) shifts the scale factor accordingly thus canceling errors associated with contact deformation and temperature variances.

Additional Notes:

- Gage blocks with end caps can be substituted for master ring gages to set an internal datum when measuring rings. Gage blocks with end caps have the advantage of lower uncertainties; however, errors associated with improper wringing and contact deformation errors (lack of same contact geometry) need to be accounted for. This sometimes makes the total uncertainty of using gage blocks as your internal datum greater than using master ring gages.

- Master ring gages have the advantages of like geometry and ease of use (no wringing of blocks); however, master ring gages tend to have higher uncertainties. This can be minimized by sending your master ring gages directly to a national laboratory (e.g. NIST).

- One master ring gage (approximately 1.0” [25.4mm]) is necessary for setting an internal datum when measuring internal threads on the LabMaster Universal.

Uncertainty Analysis

LabMasters have an instrument (machine) uncertainty of 2.0 + 0.5L micro-inches [0.05 + 0.5L/1000 microns] (± 2 standard deviations). The length (L) variable is the size of the part being measured (units are inches/mm). Also, LabMasters have an instrument (machine) repeatability of 1.6 micro-inches [0.04 microns] (± 2 standard deviations). *Note: Just recently, it is becoming more common to drop the term "instrument uncertainty" and replace it with three specific sources of uncertainty of the instrument. These sources of uncertainty that deal with the instrument are master gage calibration, scale calibration, and instrument geometry uncertainty.

As a direct reading machine (long-range comparator) there is a level of confidence of 95% that the true value will not exceed ± 2 micro-inches [± 0.05 microns] plus any uncertainty associated with the length (L) from the datum point. For more critical measurements, the LabMaster can be used as a short-range comparator thus dropping the 0.5L factor and lowering the instrument uncertainty to less than 2 micro-inches [0.05 microns].

For optimal performance, it is recommended that the environment be stable within 0.5 °F [0.25 °C], 0.05 in. Hg [1.5mm Hg], and 25% relative humidity. *Rule of thumb: most calibration laboratories try to maintain a nominal temperature of 68 °F [20 °C] and less than 50% relative humidity.

All other sources of uncertainties (master gages, thermal gradients, etc.) are usually described within an uncertainty budget formula provided by a governing agency such as NIST. These agencies can typically supply you with publications that deal with uncertainties, such as NIST Technical Note 1297, "Guidelines for Evaluating and Expressing the Uncertainty of NIST Measurement Results" and Journal of Research of the NIST, Volume 102, Number 6, "Uncertainty and Dimensional Calibrations". Another publication is the ISO "Guide to the Expression of Uncertainty in Measurement."

In other words, the uncertainty of the master gages as well as any other uncertainties needs to be accounted for to determine the total uncertainty for the measurement process. This can be achieved through one's own gage repeatability and reproducibility testing (GR&R) as well as using industry estimations for other types of uncertainties as outlined in technical publications.

To determine a worst-case total uncertainty for the LabMaster (two gage block method), the following formulas are provided. *Please note: these formulas are very conservative and should only serve as a guide in establishing worst-case scenarios and should not replace individual GR&R testing.

Estimating Total Uncertainty

Datum Uncertainty:

Ud = (Um^2 + (2 micro-inch)^2)^0.5

Ud = datum uncertainty (micro-inch)

Um = uncertainty of master gage block used to set datum (micro-inch)

Note: Where 2 micro-inch is from instrument uncertainty (2 micro-inch + 0.5L micro-inch) where L=0.

Scale Factor Uncertainty:

Us = (Um1^2 + Um2^2 + 8 micro-inch^2)^0.5 / (L2 - L1) + (2 micro-inch / inch / °F) x dT

Us = scale factor uncertainty (micro-inch / inch)

Um1 = uncertainty of block 1 (micro-inch)

Um2 = uncertainty of block 2 (micro-inch)

L1 = length of master block 1 (inch)

L2 = length of master block 2 (inch)

2 micro-inch / inch / °F = machine uncertainty as a function of temperature normalized to per inch basis.

dT = room temperature variation during test (°F)

Note: Where 8 is the sum of the squares of two instrument uncertainties with L set to 0 (2^2 + 2^2).

Total Uncertainty:

U = Ud + Us [L – Ld]

U = total measurement uncertainty

Ud = datum uncertainty (micro-inch)

Us = scale factor uncertainty (micro-inch / inch)

L = Length of part being measured (inch)

Ld = datum point (inch)
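The three formulas above can be chained together as follows. This is a sketch for checking one's own arithmetic, not vendor software: the function names and the example inputs are hypothetical, and taking the absolute value of (L - Ld) is an assumption made so that parts shorter than the datum block also give a positive result.

```python
import math

INSTRUMENT_FLOOR = 2.0   # micro-inch, instrument uncertainty with L = 0
TEMP_TERM = 2.0          # micro-inch / inch / °F, from the formula above


def datum_uncertainty(u_master):
    """Ud = (Um^2 + 2^2)^0.5, in micro-inch."""
    return math.sqrt(u_master ** 2 + INSTRUMENT_FLOOR ** 2)


def scale_factor_uncertainty(u_m1, u_m2, l1, l2, d_temp_f):
    """Us = (Um1^2 + Um2^2 + 8)^0.5 / (L2 - L1) + 2 * dT, in micro-inch/inch."""
    if l1 == l2:
        raise ValueError("the two master blocks must differ in length")
    instrument_sq = 2 * INSTRUMENT_FLOOR ** 2   # the "8" in the formula
    root = math.sqrt(u_m1 ** 2 + u_m2 ** 2 + instrument_sq)
    return root / abs(l2 - l1) + TEMP_TERM * d_temp_f


def total_uncertainty(u_d, u_s, length, datum_length):
    """U = Ud + Us * |L - Ld|, in micro-inch."""
    return u_d + u_s * abs(length - datum_length)


if __name__ == "__main__":
    # Hypothetical case: 1.0-inch (2 micro-inch) and 4.0-inch (3 micro-inch)
    # masters, datum on the 1.0-inch block, 0.1 °F drift, 3.0-inch part.
    u_d = datum_uncertainty(2.0)
    u_s = scale_factor_uncertainty(2.0, 3.0, 1.0, 4.0, 0.1)
    print(f"Ud = {u_d:.2f} micro-inch")
    print(f"Us = {u_s:.2f} micro-inch/inch")
    print(f"U  = {total_uncertainty(u_d, u_s, 3.0, 1.0):.2f} micro-inch")
```

Note how the temperature term grows with distance from the datum, which is why a master close in size to the part keeps the total low.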

*Helpful hints: To lessen the total uncertainty, perform a two point mastering with the same probes being used in the measurement. For example, when measuring ring gages, perform a two point master with two master internal diameters and forgo the Datum Uncertainty. The same holds true when using flat probes (anvils) or any other type of probes. Also, when measuring gages using the bi-directional probes (OD), there is no need to set a datum because the first point acts as the datum.

Measurements Outside Two Masters:

If it is necessary to measure outside the two master points, the result of the following formula can be added to the calculated total measuring uncertainty.

Uo= ((Um1 + Um2) x D) / (P2 - P1)

Uo = added uncertainty outside the master points (micro-inch)

D = distance of measurement from nearest calibration point (inch)

P1 = first master point (inch)

P2 = second master point (inch)

Um1 = uncertainty of first master (micro-inch)

Um2 = uncertainty of second master (micro-inch)

*Example: A LabMaster has been mastered using a 1.0-inch [25.4 mm] and a 4.0-inch [101.6 mm] gage block. The 1.0-inch block has 2 micro-inch uncertainty and the 4.0-inch block has 3 micro-inch uncertainty. A measurement is made at 5 inches [127 mm], which is 1.0 inch beyond the nearest master point.

Uo = ((2 + 3) x 1.0) / (4.0 - 1.0) = (5 x 1.0) / 3.0

Uo = approximately 1.7 micro-inch
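The same calculation can be written as a small helper. This is a minimal sketch with a hypothetical function name; returning zero for a measurement that lies between the masters is an assumption, since the formula is only stated for points outside them.

```python
def outside_master_uncertainty(u_m1, u_m2, p1, p2, measured_at):
    """Uo = ((Um1 + Um2) x D) / (P2 - P1), in micro-inch.

    D is the distance from the measurement to the nearest master point.
    """
    if p1 == p2:
        raise ValueError("the two master points must differ")
    low, high = sorted((p1, p2))
    if low <= measured_at <= high:
        return 0.0   # assumption: no added term between the masters
    distance = min(abs(measured_at - low), abs(measured_at - high))
    return (u_m1 + u_m2) * distance / (high - low)


if __name__ == "__main__":
    # The worked example: masters at 1.0 and 4.0 inch, measurement at 5 inch.
    u_o = outside_master_uncertainty(2.0, 3.0, 1.0, 4.0, 5.0)
    print(f"Uo = {u_o:.2f} micro-inch")
```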

Time Study / Measurement Cycle Times

A time study has been conducted on the LabMaster Universal that provides initial mastering time, accessory setup time, and cycle times for the more popular measuring applications. This information is helpful in calculating the re-datum frequency as well as in preparing one's justification and return on investment (ROI) figures.

Initial Mastering Time

Needed only once: 2:00 minutes

Accessory Setup Time

These times include mounting the accessories on the LabMaster and setting a datum.

Gage Block: 0:00 minutes (mastered w/blocks)

Ring Gage: 1:00 minute

Plug Gage: 0:00 to 2:00 minutes (depending on size)

Threaded Plug Gage: 1:45 minutes

Threaded Ring Gage: 2:30 minutes

Snap Gage: 2:15 minutes

Micrometer: 1:15 minutes

Dial Indicator: 2:00 minutes

Electronic Probe: 2:00 minutes

Cycle Times

The times below include fixturing the part (if necessary) to the LabMaster and taking the number of readings as noted.

Gage Block: 2:15 minutes/ 10 blocks (1 reading/block, shop blocks)

Ring Gage: 2:00 minutes/ 6 readings

Plug Gage: 1:00 minute/ 3 readings

Threaded Plug Gage: 2:30 minutes/ 2 readings

Threaded Ring Gage: 2:00 minutes/ 1 reading

Snap Gage: 1:00 minute/ 1 reading

Micrometer: 1:45 minutes/ 10 readings

Dial Indicator: 1:45 minutes/ 10 readings

Electronic Probe: 1:45 minutes/ 10 readings
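The time-study figures can be combined to estimate how long a batch of work will take. The sketch below is illustrative: the dictionary layout and function names are hypothetical, only a subset of the applications is included, and the estimate ignores any time spent re-checking the datum between parts.

```python
MASTERING_S = 120   # initial mastering, needed only once (2:00)

# application: (setup time "m:ss", cycle time "m:ss", readings per cycle),
# copied from the time study above.
TIMES = {
    "gage block": ("0:00", "2:15", 10),
    "ring gage": ("1:00", "2:00", 6),
    "threaded plug gage": ("1:45", "2:30", 2),
    "threaded ring gage": ("2:30", "2:00", 1),
    "snap gage": ("2:15", "1:00", 1),
    "micrometer": ("1:15", "1:45", 10),
}


def to_seconds(text):
    """Convert an 'm:ss' string to seconds."""
    minutes, seconds = text.split(":")
    return int(minutes) * 60 + int(seconds)


def seconds_per_reading(application):
    """Average time for one reading in the given application."""
    _, cycle, readings = TIMES[application]
    return to_seconds(cycle) / readings


def job_time_seconds(application, readings, include_mastering=True):
    """Mastering (optional) + accessory setup + readings, in seconds."""
    setup, _, _ = TIMES[application]
    total = to_seconds(setup) + readings * seconds_per_reading(application)
    return total + (MASTERING_S if include_mastering else 0)


if __name__ == "__main__":
    print(f"gage block: {seconds_per_reading('gage block'):.1f} s per reading")
    print(f"6 ring readings: {job_time_seconds('ring gage', 6) / 60:.1f} minutes")
```

The 13.5 seconds per gage block obtained this way matches the 13-second interval suggested for the repeatability test.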

Justification / Return on Investment (ROI)

The need to improve corporate bottom lines, measure tighter tolerances and comply with standards such as ISO 9000, QS-9000 and Z540-1 forces quality managers to continuously improve efficiencies.

In order to help meet these goals, organizations should replace older calibration equipment with newer, more efficient models. While stated accuracy or uncertainty is always a major consideration in any such purchase, many other factors must be considered to get the best equipment for specific needs. This article examines high-end gaging products.

Begin by considering instrument uncertainty, repeatability and resolution. It's important to remember that instrument uncertainty and accuracy are not the same. Simply put, accuracy is the difference between a true value and a measured one. For example, a measuring device with 0.0005 inch (0.0127 mm) accuracy will provide readings that may be 0.0005 inch (0.0127 mm) in error.

On the other hand, an instrument uncertainty statement incorporates the use of statistical analysis to convey the probable error in a measurement. It includes the sensor's absolute accuracy, repeatability and resolution, as well as any variability introduced by the equipment's mechanics. This uncertainty is then used in conjunction with outside uncertainties, such as the environment or masters, to establish an overall measuring uncertainty. Clearly, an instrument uncertainty statement contains more extensive mathematical calculations that better qualify the instrument's measuring capability.

In the case of a measuring instrument, repeatability is a measuring device's inherent ability to consistently repeat its readings. A manufacturer's repeatability study is usually conducted when all other factors that would affect the result, such as the master and operator, are not changed. Although this is an important specification to consider, it should not be assumed that a highly repeatable system would also be accurate. Without an instrument uncertainty or accuracy statement, a repeatability statement alone provides no useful benefit.

Resolution refers to the number of trailing digits available on the display. It is also referred to as the smallest positional increment that can be seen. Once again, beware of dramatic product claims that only provide a resolution or repeatability statement. If the manufacturer's literature doesn't provide accuracy or instrument uncertainty information, have the company send it to you.

Next, consider the sensor technology used. For instance, high-accuracy gaging units use either a precision linear encoder or a laser-based interferometer. Linear encoders come in different grades and generally provide instrument uncertainties of 10 micro-inches (0.25 microns). Laser-based units, on the other hand, can routinely achieve results in the 2-3 micro-inch range (0.05-0.075 microns). Again, be sure that the accuracy specification you receive is for the complete unit, not just the sensor.

When considering accuracy, remember a phenomenon known as Abbe offset errors. The details of Abbe offset errors could fill up a separate article, but in simple terms the idea is that to minimize overall measurement error, the sensor and measurement axis must be in line. For example, dial calipers, where the measurement scale is offset from the measurement axis, are subject to Abbe offset errors. However, micrometers, where the measurement barrel is in line with the measurement axis, are not subject to Abbe offset errors.
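The size of an Abbe offset error can be estimated from the relation given in the Definitions, Error = L sin(theta), where L is the offset between the scale and the measurement axis and theta is the angular error of the slide. The sketch below uses a hypothetical function name and hypothetical example values.

```python
import math

ARCSEC_TO_RAD = math.pi / (180.0 * 3600.0)


def abbe_error_microinch(offset_inch, tilt_arcsec):
    """Error = L * sin(theta), returned in micro-inch."""
    theta = tilt_arcsec * ARCSEC_TO_RAD
    return offset_inch * math.sin(theta) * 1e6


if __name__ == "__main__":
    # Hypothetical case: scale offset 1 inch from the measurement axis,
    # slide tilts by 1 arc-second during travel.
    print(f"{abbe_error_microinch(1.0, 1.0):.2f} micro-inch")
    # With the sensor in line with the measurement axis the offset is zero.
    print(f"{abbe_error_microinch(0.0, 1.0):.2f} micro-inch")
```

Even one arc-second of tilt at a one-inch offset produces an error larger than a 2 micro-inch instrument uncertainty, which is why in-line sensor placement matters at this level.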

Also evaluate the unit's style or configuration. Choices include direct reading units, which can make many different measurements within a specific range without re-mastering, and short-range comparators, which must be re-mastered for each size to be checked. For example, Pratt & Whitney's new Universal Supermicrometer has a direct reading range of 2 inches (50.8 mm) and a total measuring range of up to 11 inches (279 mm). This allows the operator to make many measurements quickly and accurately over a 2 inch direct reading range before repositioning the probes. For larger direct reading ranges, laser-based instruments provide direct readings up to 64 inches (1,626 mm) in length.

Most calibration laboratories historically have relied on short-range comparators for precision measurements. These units, while relatively inexpensive to purchase, require substantially more labor than direct reading units to operate properly. For example, it's possible to calibrate an 81-piece gage block set in less than one-and-a-half hours using a direct reading unit. The same task can take up to eight hours using a short-range comparator. The labor cost savings achieved in gage block calibration using a direct reading unit can fully justify an equipment purchase. In addition, direct reading units reduce the number of masters required. Short-range comparators require a separate master for each value measured; direct reading units only require an upper and a lower limit master to set a range to measure within.
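The labor comparison above lends itself to a simple payback estimate. This is a rough sketch only: the function names, the labor rate, and the price premium are hypothetical placeholders to be replaced with a laboratory's own figures; only the 8-hour and 1.5-hour times come from the text.

```python
def savings_per_job(hours_old, hours_new, labor_rate):
    """Labor cost saved each time the job is done on the faster unit."""
    return (hours_old - hours_new) * labor_rate


def jobs_to_pay_back(price_premium, hours_old, hours_new, labor_rate):
    """Number of jobs needed before the labor savings cover the premium."""
    saved = savings_per_job(hours_old, hours_new, labor_rate)
    if saved <= 0:
        return float("inf")   # no savings, so the premium is never recovered
    return price_premium / saved


if __name__ == "__main__":
    # From the text: about 8 h on a short-range comparator versus 1.5 h on
    # a direct reading unit for an 81-piece gage block set.
    # Hypothetical: 50 dollars/hour labor rate, 10,000 dollar price premium.
    per_set = savings_per_job(8.0, 1.5, 50.0)
    sets = jobs_to_pay_back(10_000.0, 8.0, 1.5, 50.0)
    print(f"saved per set: {per_set:.0f} dollars")
    print(f"sets to pay back the premium: {sets:.1f}")
```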

Additionally, users can choose between dedicated internal (ID) or external (OD) machines, or those capable of doing both types of measurements. Units that do both offer many advantages. Besides the obvious cost savings associated with eliminating duplicated sensors, technicians must only become proficient with one machine. Combination units also require less bench space and allow one technician to quickly change from making OD to ID measurements.

Many other points should be evaluated to ensure that you get the right unit for your needs. Check both the unit's total range and the measuring table's size. Make sure it's big enough to accommodate large parts and that the table will handle the weight. The table should have integrated locating posts and T slots, which make part alignments and fixturing quicker. For ID measurements, make sure there are easy-to-use swivel, centering, tilt and elevation adjustments. When considering combination units, note whether the unit has bi-directional probes or uses a separate setup for each. Many units with separate ID and OD stations typically only observe the Abbe offset rule on one.

Ask for a Demo

Before making an important investment in calibration equipment, take a test drive. If possible, include the instrument operators. They can help quantify differences in setup times and throughput. If you're not able to check out a machine in person, ask for a video.

When testing the equipment, perform a gage repeatability and reproducibility study, an excellent indicator of whether an instrument will actually perform to your expectations. GR&R studies also are referred to as machine capability studies. A typical GR&R study might consist of 10 parts of similar characteristics being measured three times by two or three operators. Once the data has been collected, a measurement error analysis and percent tolerance analysis can be made on the parts being measured. Many gage management packages include a GR&R module to simplify these calculations.

Due to the versatility of combination ID/OD calibration systems, changeover from one setup to another sometimes becomes inevitable. When assessing throughput of a particular measurement, also address the time needed to switch over from one setup to another. For instance, when changing from outside dimensions to inside dimensions, do you need to change the contact probes, force dials or some other levers? This will definitely increase calibration time. Some multifunctional gages have bi-directional probes that allow both ID and OD measurement as well as an automatic force system that requires no adjustments at all.

The method of calibrating the unit (also known as "mastering") is something else to consider. Questions include: How long does the calibration take? How long can measurements be taken without going back to the set point? Does the system allow for a few different methods of calibration?

Other points to consider: How long is the warranty? Does the manufacturer have a return policy if the instrument does not perform to stated specifications? Do the specifications state, as a minimum: instrument uncertainty, repeatability and resolution? Does the manufacturer have a reputation for quality products and good customer service?

Compare Accessories and Features

Manufacturers differ in the accessories they include with the unit. Because ID/OD machines are so versatile, nearly all of them include a host of optional accessories. The probes and fingers required to do all standard calibrations can add up to 20 percent of the purchase price. The best advice here is to get a list from the manufacturer that cross-references the necessary applications to the appropriate accessories needed to accomplish your measurements.

Multifunctional instruments also have come a long way with the features they incorporate and benefits they can deliver. During the past several years, the integration of computers has allowed operators to simply push a key or click a mouse to move contact probes. Equally impressive is the ability of some systems to send the measurement value to any user-specified program, such as Excel. Other desirable features include: Windows-based software, automatic tolerancing, serial and parallel output ports, built-in bi-directional force system, two-point calibration and a modular construction.

The Bottom Line

With nearly any product you buy, you get what you pay for. Just be careful about what you might be sacrificing for a less expensive product or model. An instrument costing $5,000 or $10,000 less might be lacking the speed and throughput that would have paid back this amount in a relatively short period of time.

Because metrology departments still carry the stigma of being non value-added, they sometimes have difficulty getting the appropriate funding for capital equipment. Writing a long, complicated justification is almost a necessity in order to get equipment that is long overdue. When writing justifications, remember the magic word: payback. Showing how the proper new equipment will improve overall quality, increase efficiency and lower costs should enable you to get the equipment that will maximize your in-house gage calibration capabilities.

About the author

Daniel J. Tycz is Product Manager for Pratt & Whitney Measurement Systems.

Pratt & Whitney is a leading manufacturer of precision dimensional metrology instruments. Product lines include: Supermicrometer®, LabMaster®, Labmicrometer®, Laseruler®, and Measuring Machines.

For more information please contact:

Pratt & Whitney Measurement Systems, Inc.

66 Douglas Street, Bloomfield, CT 06002

Tel (860) 286-8181

www.prattandwhitney.com

email: info@prattandwhitney.com

Definitions:

- Laser interferometer (fringe counting) - this type of interferometer literally counts the fringes caused by the interference of the two arms of the interferometer. Interference in an interferometer is caused by the adding and subtracting of the waves of light from the two separate paths in the instrument. Thus as the relative path lengths in the two interferometer arms are changed, bright and dark fringes are produced and counted.

- Abbe error (Error = L sin (theta)) - if errors of parallax are to be avoided, the measuring sensor must be placed in line with the contact points where the length of the work piece is to be measured.

- Traceability - a paper trail, usually citing calibration reports, that demonstrates that the calibrated masters used in the calibration are connected back to national or international standards. Also, traceability now requires an uncertainty statement for the measurement result, which in turn requires an uncertainty chain.

- Uncertainty of a measurement means doubt about the validity of the result of the measurement. Uncertainty of measurement is a parameter, associated with the result of a measurement that characterizes the dispersion of the values that could reasonably be attributed to the measurand. It can also be expressed as an estimate characterizing the range of values within which the true value of a measurand lies. When specifying the uncertainty of a measurement, it is necessary to indicate the principle on which the calculation has been made.

- Repeatability - is defined as the measurement variation of a gage or test equipment when used by one operator or under one set of environmental conditions.
